Proof of Concept at CeBIT

Many specialists following the technological revolution unfolding before our eyes compete to forecast how much data mankind will generate in the coming years. Against this backdrop, processing such large volumes of information is becoming a serious challenge.

Some of the problems that should be addressed when performing operations on the so-called Big Data include:

- Classic relational databases are often unsuitable for storing this information, and analysis that would yield important business insight is difficult because the data lacks a proper structure

- The need to process data streams “live”, which existing Business Intelligence solutions cannot handle

- Storing data from a large number of sources, and in large volumes, in Data Warehouse solutions becomes too expensive and inefficient

Social media are an important source of information (for example, opinions about products and current trends) that is highly valued by marketing departments. Natural language, especially in its everyday form, is what platforms such as Twitter and Facebook mostly contain, and it turns out to be difficult to analyze. Nevertheless, given the importance of the content appearing in social media and the growing need to structure information, processing such data is now one of the fastest growing branches of Big Data in the broad sense.

At Apollogic, we constantly monitor the market, looking for the best solutions that can be used in future projects. This is why, during this year’s CeBIT in Hannover, we conducted a PoC (Proof of Concept) designed to test the latest Big Data technologies that facilitate efficient processing of unstructured data.

The project aimed to analyze Tweets posted during one of the largest technology fairs in the world. It was meant to show which tools and trends in IT are most intensely discussed, and to assess the tone of the opinions.

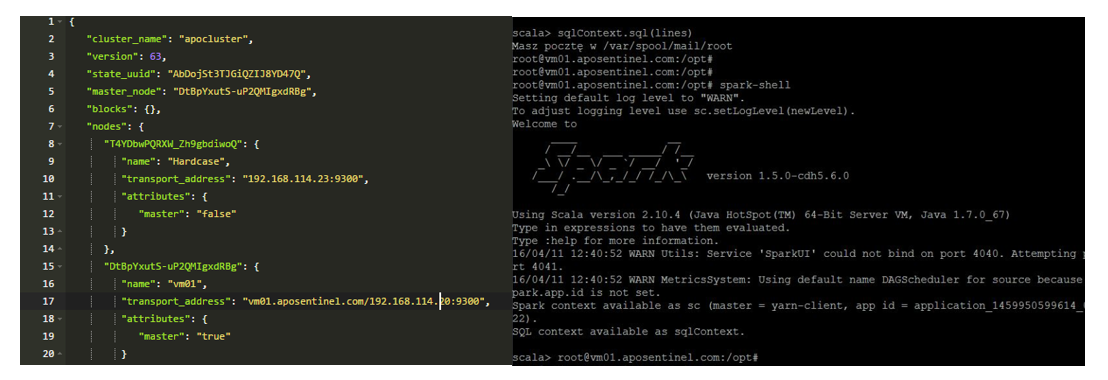

Nearly 50 thousand related posts were published on Twitter during the event. To carry out all the necessary operations, we set up a cluster of computers and installed on it an environment composed of tools such as:

- Apache Spark

- Apache Hadoop

- Elasticsearch

This combination helped to obtain high performance and convenience in data processing.

Particularly noteworthy are the libraries offered by Apache Spark. Spark Streaming makes it easy to perform memory-intensive operations on a stream of data. The representation of a data set that Apache Spark can distribute across a large number of machines is called an RDD (Resilient Distributed Dataset). The way Spark Streaming represents data is illustrated in the following figure:

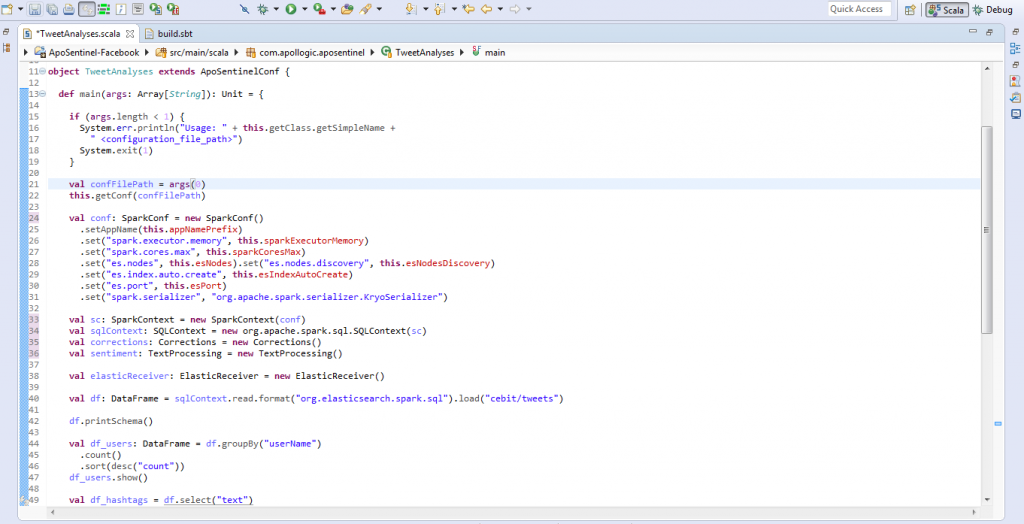
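The micro-batch idea behind Spark Streaming, where an incoming stream is cut into small batches that are then processed one by one, can be sketched in plain Python. This is only an illustration and does not use Spark: the sample tweets, the 5-second interval, and the helper names `micro_batches` and `hashtag_counts` are all assumptions made for this example, not part of the PoC.

```python
from collections import Counter, defaultdict

# Hypothetical sample events: (seconds since start, tweet text).
# Invented for illustration; not data from the PoC.
EVENTS = [
    (0.5, "Great #BigData talks at #CeBIT"),
    (1.2, "#Spark demo was impressive"),
    (4.8, "Queueing for the #BigData keynote"),
    (5.1, "#CeBIT hall 2 is packed"),
    (9.9, "More #Spark and #BigData sessions"),
    (10.0, "Closing thoughts on #CeBIT"),
]


def micro_batches(events, interval):
    """Group timestamped events into consecutive fixed-length batches.

    Intervals that received no events are skipped in this sketch.
    """
    batches = defaultdict(list)
    for timestamp, text in events:
        batches[int(timestamp // interval)].append(text)
    return [batches[i] for i in sorted(batches)]


def hashtag_counts(batch):
    """Count hashtags (case-insensitively) in one batch of tweets."""
    counts = Counter()
    for text in batch:
        counts.update(w.lower() for w in text.split() if w.startswith("#"))
    return counts


batches = micro_batches(EVENTS, interval=5.0)
per_batch = [hashtag_counts(b) for b in batches]

# Merge the per-batch results into a running total.
total = sum(per_batch, Counter())

for i, counts in enumerate(per_batch):
    print(f"batch {i}: {dict(counts)}")
print("total:", total.most_common())
```

In a real cluster each batch would be distributed across machines rather than held in a single Python list, but the flow of slicing the stream, processing each slice and merging the results is the same.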

The DataFrame library also proved very useful. A DataFrame is a collection of data that can be created from different sources, e.g. JSON, and that represents the data in the form of columns. This makes it possible to run classic SQL queries, which significantly speeds up and simplifies working with the data and combining data from various sources.
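The general idea of turning JSON records into named columns and then querying them with SQL can be sketched as follows. As a stand-in for Spark, the sketch uses `sqlite3` from Python's standard library, so it shows the concept rather than Spark's API; the field names (`author`, `topic`, `retweets`) and the sample records are hypothetical and invented for this example.

```python
import json
import sqlite3

# Hypothetical JSON lines; field names and values are invented for illustration.
RAW = """
{"author": "alice", "topic": "spark", "retweets": 4}
{"author": "bob", "topic": "hadoop", "retweets": 1}
{"author": "carol", "topic": "spark", "retweets": 7}
{"author": "dave", "topic": "elasticsearch", "retweets": 2}
"""

# Parse one JSON object per line into a list of dictionaries.
records = [json.loads(line) for line in RAW.strip().splitlines()]

# Load the records into an in-memory table with one column per JSON field.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tweets (author TEXT, topic TEXT, retweets INTEGER)")
conn.executemany(
    "INSERT INTO tweets VALUES (:author, :topic, :retweets)", records
)

# A classic SQL aggregation: posts and retweets per topic.
rows = conn.execute(
    """
    SELECT topic, COUNT(*) AS posts, SUM(retweets) AS retweets
    FROM tweets
    GROUP BY topic
    ORDER BY posts DESC, topic ASC
    """
).fetchall()
conn.close()

for topic, posts, retweets in rows:
    print(topic, posts, retweets)
```

In PySpark the rough counterparts of these steps are `spark.read.json(...)` to build the DataFrame, `createOrReplaceTempView(...)` to give it a table name, and `spark.sql(...)` to run the query, with the work spread over the cluster instead of a single process.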

With such a well-chosen set of tools, the project was carried out quickly and efficiently. However, natural language processing still leaves considerable room for improvement, which will certainly be exploited over time as increasingly better solutions emerge on the market.

- On 15/04/2016
